LLM-Powered Supply Chain Planning: Make Faster, Smarter Calls

Imagine compressing an entire week of supply chain analysis into only a few minutes.

That’s the promise highlighted by MIT professor David Simchi-Levi and coauthors in their recent piece, “How Generative AI Improves Supply Chain Management.” They argue that large language models (LLMs), a category of generative AI, are on track to make it possible. With these tools, companies can fine-tune logistics, lower costs, and react to market changes with a level of speed and clarity that wasn’t feasible before.

Modern information technologies have already transformed supply chains through automated, data-driven decisions, yielding operations that are both leaner and less costly. Yet executives still spend substantial time interpreting system recommendations, probing scenarios, and running “what-if” analyses work that often depends on experts to clarify outputs and recalibrate systems.

LLMs, a subset of Generative AI (GenAI), reduce that dependency. They can rapidly locate data, surface insights, and evaluate scenarios, enabling leaders to decide faster (cutting timelines from days to minutes) and meaningfully increasing productivity.

Drawing on Microsoft’s experience provisioning servers and related hardware to more than 300 global data centers, the authors outline four core areas for using LLMs strategically.

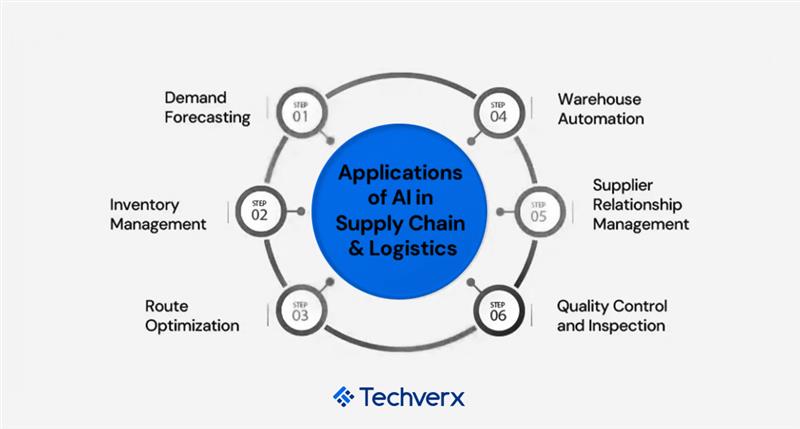

Data exploration and analysis

With LLMs, leaders can pose critical questions in everyday language, such as, “How much could we save sending A to B via C rather than D?” Delivered as a cloud service, the AI can translate the question into technical queries, execute them against the company’s databases (e.g., in SQL), and return clear answers, while preserving data privacy since nothing leaves the organization for a third party.

GenAI can also articulate the logic behind recommendations and provide additional context like trends and patterns.

Consider these examples:

- Responding to demand shifts. Cloud providers like Microsoft manage enormous server demand for services including Azure and Microsoft 365 by creating hardware deployment plans, optimizing spend, and tracking demand changes (“demand drift”). LLMs now automate much of this work, clarifying supply chain choices, flagging mistakes, and producing reports in minutes, tasks that once took planners a full week when done manually.

- Upholding contract obligations. In the automotive sector, original-equipment manufacturers (OEMs) juggle thousands of supplier contracts covering price, quality, lead times, and resilience commitments. By using LLMs to analyze these agreements, one OEM uncovered missed volume-based discounts, recovering millions that manual reviews had overlooked.

Scenario-based queries

LLMs enable planners to ask sophisticated what-if questions (e.g., the cost effects of demand spikes, factory outages, or material price swings) and receive exact, interpretable answers. A planner might pose, “If we shift 30% of production to a new facility, how would shipping delays alter our costs?” The LLM converts that question into quantitative adjustments and returns human-readable conclusions, streamlining the entire analysis cycle.

Microsoft applies this approach to refine server deployment plans and reduce spend while assigning hardware types, ship dates, and data center destinations. Previously, planners wrestled with optimization outputs and spent days collaborating with engineers to examine scenarios. Now, LLMs deliver immediate responses, compressing analysis from days to minutes. (Microsoft’s open-source implementation is available on GitHub.)

Real-time supply chain management

Using LLMs, planners can update mathematical models on the fly to mirror real-world disruptions, like plant shutdowns or supplier delays, without waiting on IT. If a site goes offline, planners can instruct the LLM to rerun optimization, generating revised plans that call out unmet demand, cost implications, and options such as expediting shipments or reallocating inventory, and all of it is communicated in plain language.

Looking forward, LLMs are set to support end-to-end decisions by letting users describe problems in natural language (e.g., production scheduling or inventory allocation). While current models can draft mathematical formulations and recommendations, validating those outputs against messy business reality remains a hurdle. Future progress aims to simplify that verification, making planning faster, more adaptive, and usable by non-technical teams.

LLM adoption and integration

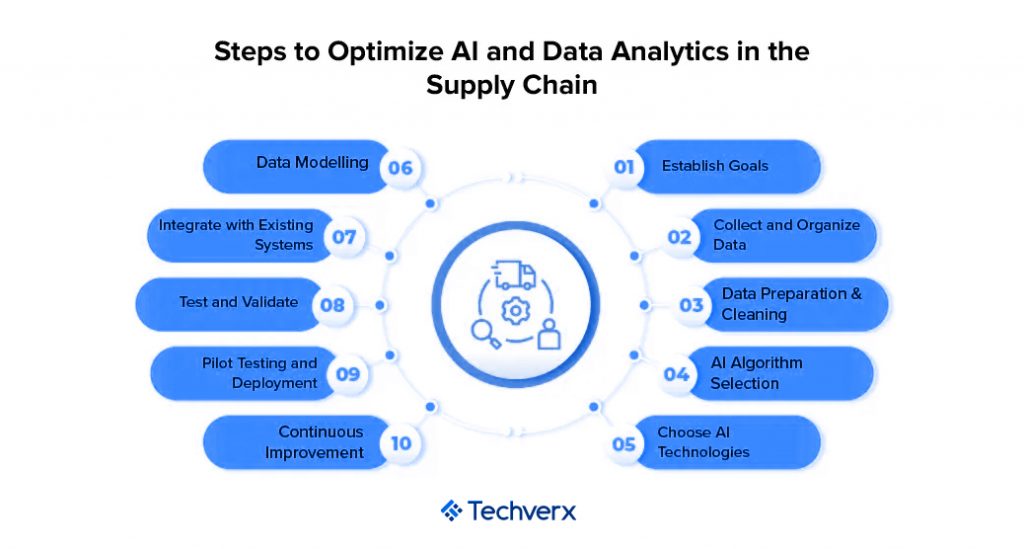

Organizations adopting LLMs for supply chain work should address several challenges to capture full value:

- Clear communication and training. LLMs produce the best results when prompts are precise, so users must learn to ask focused, specific questions. Companies should also equip managers with a practical understanding of the technology’s capabilities and limits to ensure it’s applied appropriately.

- Accuracy via confirmation and controls. Because LLMs can be fallible, firms need safeguards, such as domain-tailored examples, validation steps, and fallback behaviors, to catch errors. Even so, verifying complex AI-generated models remains an open problem that calls for ongoing research.

- Shifting toward collaboration. By automating routine tasks, LLMs free planners and executives to spend more time interpreting insights and collaborating across functions. Leaders should dismantle organizational silos and adapt processes to support these new, cross-functional ways of working.

Techverx at the Forefront of AI & GenAI for Legacy Systems

Techverx leads the practical integration of AI and GenAI into complex, brownfield supply chain landscapes, without forcing rip-and-replace. Our approach is engineered for real-world enterprises running ERPs (e.g., Dynamics 365, SAP, Oracle), WMS/TMS, and decades-old custom apps:

- Modernization without disruption

We apply the strangler-fig pattern and domain-driven interfaces to wrap legacy logic with secure APIs, enabling LLM copilots and optimization services to sit alongside existing systems. Zero-downtime rollouts, canary releases, and blue-green deploys keep operations stable while new intelligence comes online. - Data foundation built for LLMs

We establish governed data pipelines and feature stores, unify siloed records, and enable retrieval-augmented generation (RAG) with vector indexes, so LLMs answer with current, context-rich supply, demand, and contract data. - Decisioning you can trust

Guardrails, prompt validation, and policy checks are baked in. We pair optimization solvers with LLM reasoning (for explanations and what-if narratives) and keep humans-in-the-loop for material decisions like re-planning, expedites, and allocation changes. - Enterprise-grade MLOps & DevSecOps

Using Git-based workflows, CI/CD, automated tests, and observability, we version data, prompts, and models; monitor drift; and roll back safely. Security and compliance controls govern every integration point. - Cloud-native, vendor-ready

As an AWS Advanced Tier Partner, and with deep Microsoft ecosystem experience, we deploy scalable inference, orchestration, and analytics that integrate cleanly with your existing identity, logging, and cost controls. - From pilots to production

We start with targeted use cases, LLM-assisted scenario analysis, contract intelligence, demand commentary generation, and scale to end-to-end planning support (inventory, sourcing, network, and S&OP) as value is proven.

Result: you get LLM-powered planning, analysis, and reporting that plug into the systems you already run, turning week-long explorations into minutes, increasing planner capacity, and making decisions clearer, faster, and auditable. If you’re navigating the realities of legacy tech while aiming for GenAI speed, Techverx is built to get you there.

What this looks like in practice: Lessons for supply chain leaders from Techverx × OmniTeq (AThENA)

On a recent build for OmniTeq, Techverx delivered a data ingestion and processing platform (AThENA) that unified data lake management, ETL pipelines, and machine learning across highly regulated domains. The result is a great blueprint for supply chain teams that need speed, accuracy, and scale without tearing out existing systems.

1) Clean, trustworthy data, fast

LLM- and NLP-powered preprocessing turned messy, multi-source raw data into analysis-ready assets, achieving 95% accuracy in data preprocessing. In supply chains, that means cleaner EDI feeds, telemetry, WMS/TMS logs, and ERP transactions, so your LLM copilots and optimizers work off reliable facts (not noise).

2) Always-on data consistency (bye, manual rework)

Robust ETL pipelines (with scheduling/orchestration) enforced consistent transformations and reduced human error, enhancing data consistency with automated ETL workflows and boosting operational efficiency by reducing manual errors. For planners, that translates to fewer spreadsheet reconciliations and faster, audit-ready planning cycles.

3) Real-time logistics anomaly detection

Models trained to detect GPS anomalies in real time (e.g., detours, prolonged idling, route deviations) create immediate value for fleet operations, flagging exceptions before they become missed SLAs or chargebacks and tightening ETA reliability.

4) Privacy-preserving collaboration

Data anonymization enabled analysis on sensitive datasets without exposing protected attributes. In multi-tier supply networks, this unlocks cross-partner analytics (demand signals, service levels, dwell times) while respecting compliance constraints and customer contracts.

5) Elastic scale, no rip-and-replace

A serverless model and cloud-native stack scaled seamlessly to large data volumes, ideal for seasonal peaks, new lanes, and network expansions, without disrupting core ERP/WMS/TMS. This is how you add GenAI decisioning beside legacy apps, not instead of them.

6) Decision velocity (and adoption)

With the foundation in place, teams reported a 70% increase in data-driven decision-making, exactly the lift supply chain orgs need for what-ifs (demand spikes, lane changes), tactical replans, and weekly S&OP meetings. LLMs can narrate the “why” behind the math, making plans more explainable, and more adoptable.

Where supply chains feel the impact

- Demand & inventory: Cleaner signals + LLM commentary reduce forecast debate time and help rebalance inventory faster.

- Transportation & last mile: Real-time GPS anomaly detection cuts dwell/idle costs and protects on-time performance.

- Procurement & contracts: Structured, high-accuracy data enables LLM contract intelligence (discounts, SLAs) with safe sharing via anonymization.

Network & S&OP: Automated pipelines keep models fresh; LLMs explain trade-offs so executives can approve plans quickly.

A pragmatic 12-week path (inspired by the AThENA build)

- Weeks 1–3 | Data readiness sprint: Connect sources, stand up ETL, and implement LLM/NLP preprocessing for one priority flow (e.g., shipments + GPS). Target: measurable accuracy gains (aim for AThENA-level ~95% preprocessing accuracy).

- Weeks 4–6 | First ML/LLM use case: Deploy real-time anomaly detection on a pilot lane; wire alerts into ops. Track exception rate, dwell time, and SLA adherence. (Maps to AThENA’s real-time anomaly detection).

- Weeks 7–9 | Explainable planning: Layer an LLM “copilot” to narrate optimization outputs and run scenario queries in natural language; measure meeting time saved and actionability (70% lift in data-driven decisions is a solid benchmark).

- Weeks 10–12 | Scale & harden: Shift workloads to serverless/cloud-native orchestration, add anonymization for partner sharing, and define SLIs/SLOs for pipelines (AThENA’s scalable solution for large volumes + automated ETL pattern).

Why Techverx

Techverx engineered the AThENA platform end-to-end, team of software engineers, QA, and PM, delivering in a 3-month fixed-scope window with a modern stack (Python, Apache Spark, PyTorch, MLflow/Airflow, Kubernetes, Redshift, serverless). That combination, clean data, automated pipelines, real-time ML, and privacy-first design, is exactly what supply chain orgs need to plug LLMs into legacy systems and start making faster, smarter decisions now.

Ready to build your team of tomorrow? Talk to a Techverx consultant today

Hiring engineers?

Reduce hiring costs by up to 70% and shorten your recruitment cycle from 40–50 days with Techverx’s team augmentation services.

Related blogs